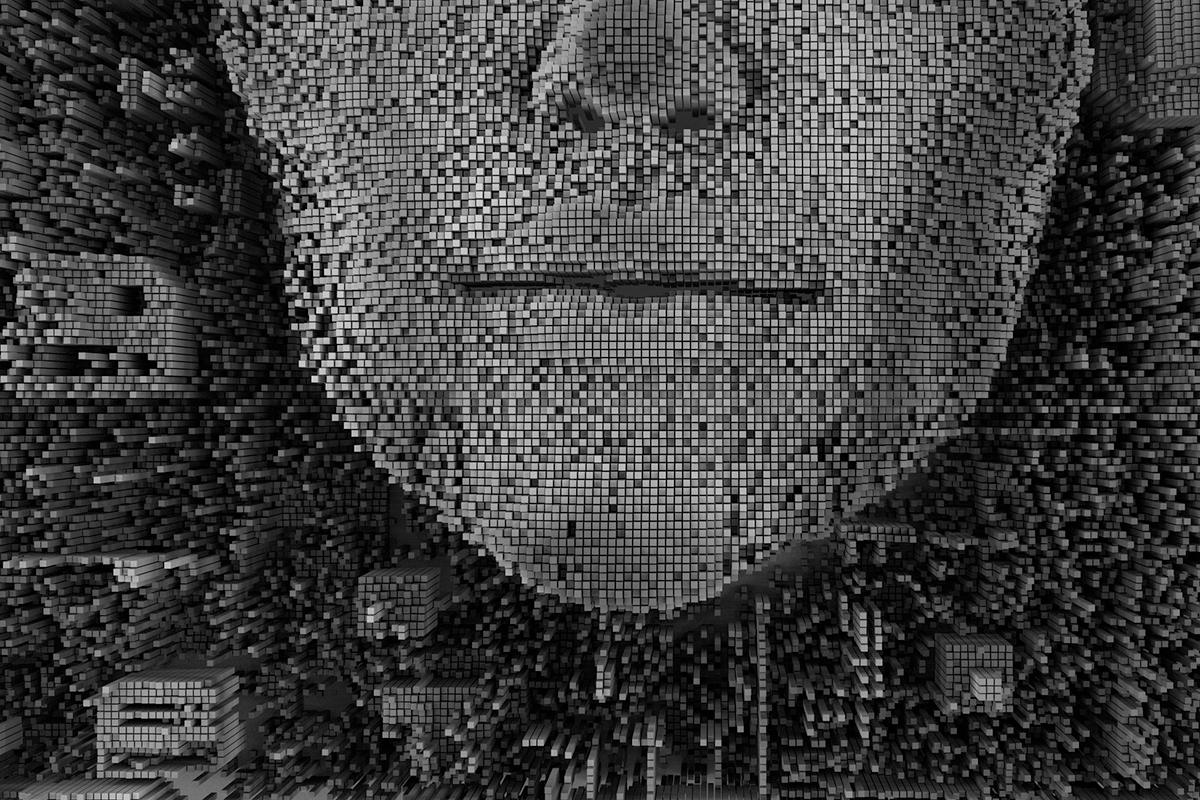

Cyborgs Aren’t Here Yet, But AI Is

By DirectorCorps

August 27, 2019 Technology

Machine learning has created exciting prospects for industry, science and humanity.

It’s also hyped and heavily marketed in misleading ways. Concerns have been raised that companies such as the startup Engineer.ai are misleading the public about the extent of their AI capabilities to attract funding and customers. Artificial intelligence is loosely defined, and that is breeding a lot of confusion.

Given these conflicting messages, corporate leaders should take some time to learn what AI is, and what it is not. Machine learning is a branch of artificial intelligence based on the idea that systems can learn from data, identify patterns and make decisions with minimal human intervention, according to the analytics and software company SAS Institute. The important distinction is that machine learning systems can operate with “minimal,” but not “no,” human intervention.

Benedict Evans, a venture capitalist in this space, has written a fairly digestible description of this important distinction and of the problem of AI bias. One analogy he uses is the drug-sniffing dog at airports.

“Machine learning is much better at doing certain things than people, just as a dog is much better at finding drugs than people, but you wouldn’t convict someone on a dog’s evidence. And dogs are much more intelligent than any machine learning,” he wrote.

AI is already deployed in the marketplace, though it’s not yet the stuff of science fiction. It’s used in spam filters, in Google Maps and in voice recognition software such as Amazon.com’s Alexa and Apple’s Siri. Gmail now suggests three brief, automated replies to many emails; users are free to select one to speed up communication or to ignore them.

Robo-advisors and other wealth managers use AI to select portfolios. On the back end, companies use AI to detect fraud. Companies such as Mitek Systems use AI for digital identity verification and for mobile banking deposits. Some companies use robotic process automation to quickly scan documents or perform other repetitive tasks that slow humans down.

The potential use cases for AI go well beyond those examples. A few companies are now testing driverless cars on roads across the United States; the technology has the potential to revolutionize driving, not to mention transit management, logistics and other industries. But for now, drivers need to stay alert so they can react should a problem occur. It’s not yet safe to let the computer make all its own decisions.

“The brittleness of today’s systems means companies must also devote considerable resources towards understanding the situations under which they may fail and constructing the necessary cushioning to minimize the impact of such failures on the applications themselves,” writes Kalev Leetaru, a former senior fellow at the George Washington University Center for Cyber and Homeland Security. Perhaps, he suggests, machine learning is better described as an “evolution” than a “revolution.”

The cyborgs aren’t here yet.